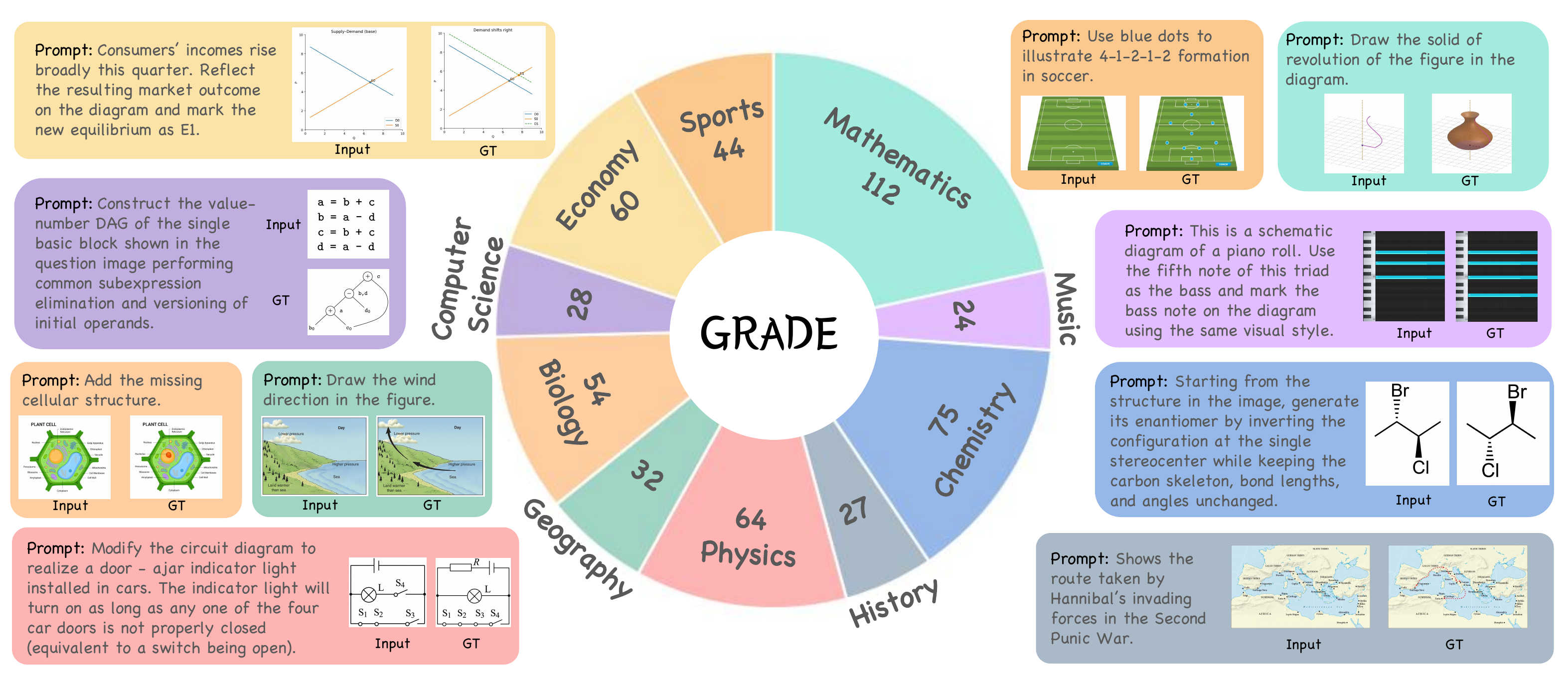

GRADE is the first benchmark for evaluating discipline-informed knowledge and reasoning in image editing. It comprises 520 carefully curated samples across 10 academic domains, ranging from natural science to social science, and provides a multi-dimensional automated evaluation protocol that jointly assesses Discipline Reasoning, Visual Consistency, and Logical Readability.

Distribution of 520 samples across 10 academic disciplines with fine-grained sub-categories.

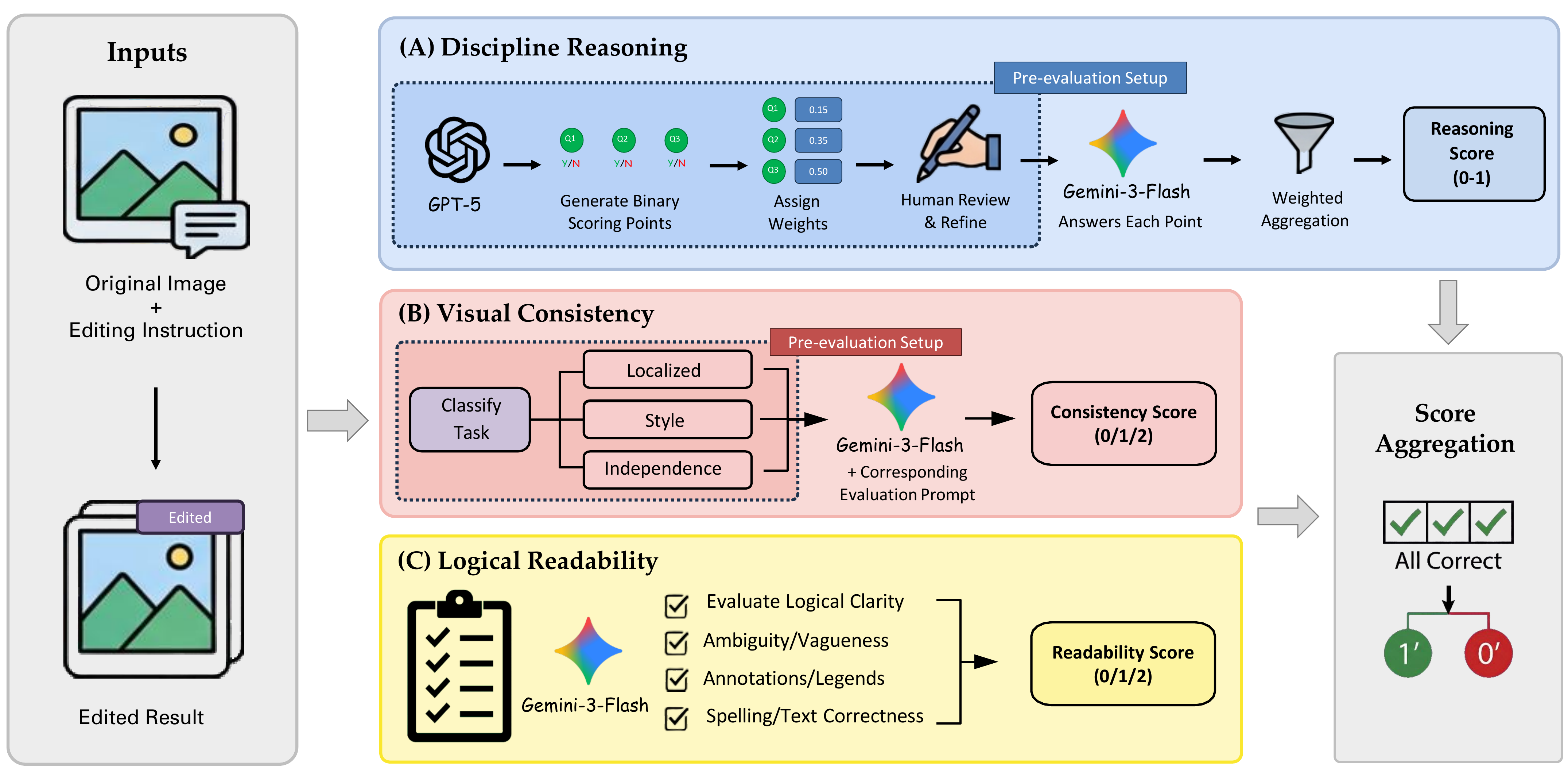

Discipline Reasoning evaluates whether edited results correctly reflect the underlying discipline knowledge through a structured QA-guided VQA protocol. Each sample is scored on weighted binary scoring points, giving a score in the range [0, 1].
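As a rough illustration of weighted binary scoring, the sketch below sums the weights of the scoring points judged correct and divides by the total weight. The function name, the `(weight, passed)` pair layout, and the normalization by total weight are assumptions made for illustration; the text only states that the points are weighted, binary, and yield a score in [0, 1].

```python
def reasoning_score(points):
    """Return a score in [0, 1] from (weight, passed) scoring points.

    Assumption: the score is the weight of the satisfied points divided
    by the total weight. The benchmark's exact formula is not given here.
    """
    total = sum(weight for weight, _ in points)
    if total <= 0:
        raise ValueError("scoring points must have a positive total weight")
    earned = sum(weight for weight, passed in points if passed)
    return earned / total


# Hypothetical sample with three scoring points, two of them satisfied.
example = [(2, True), (1, False), (1, True)]
print(reasoning_score(example))  # 0.75
```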

Visual Consistency assesses whether edits blend consistently with the expected visual structure. It covers three task-related types: Localized, Style, and Independence. Scores take values in {0, 1, 2}.

Logical Readability evaluates whether the edited image presents discipline content in a clear, logically coherent, and interpretable form. Scores take values in {0, 1, 2}.

A sample is considered passed only when all reasoning questions are answered correctly, and both Visual Consistency and Logical Readability receive full marks.
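The pass criterion above can be sketched as a simple predicate. The function and parameter names are hypothetical, and "full marks" is taken to mean a score of 2 on the {0, 1, 2} scales. The `pass_rate` helper, which reports the share of passed samples as a percentage, is likewise an assumption about how such a criterion might be aggregated.

```python
FULL_MARKS = 2  # top of the {0, 1, 2} scale


def sample_passed(reasoning_answers, consistency, readability):
    """True only if every reasoning question is correct and both
    Visual Consistency and Logical Readability receive full marks."""
    return (
        all(reasoning_answers)
        and consistency == FULL_MARKS
        and readability == FULL_MARKS
    )


def pass_rate(samples):
    """Percentage of passed samples (assumed aggregation, for illustration).

    Each sample is a (reasoning_answers, consistency, readability) tuple.
    """
    if not samples:
        raise ValueError("no samples to aggregate")
    passed = sum(sample_passed(*sample) for sample in samples)
    return 100.0 * passed / len(samples)
```

Under this reading, a single wrong reasoning answer or a score of 1 on either rubric is enough to fail the sample.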

A weighted combination of three dimensions: Reasoning contributes 60%, Consistency contributes 30%, and Readability contributes 10%, all normalized to a 0–100 scale.
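A minimal sketch of this weighted combination follows. The 60/30/10 weights come from the description above; the function name and the normalization (dividing the two rubric scores by their maximum of 2 before weighting, with Reasoning already in [0, 1]) are assumptions about how the dimensions are brought onto a common scale.

```python
# Weights stated in the description above.
W_REASONING, W_CONSISTENCY, W_READABILITY = 0.6, 0.3, 0.1
MAX_RUBRIC = 2  # top of the {0, 1, 2} scale


def combined_score(reasoning, consistency, readability):
    """Combine the three dimensions into a 0-100 score.

    Assumption: each dimension is first normalized to [0, 1].
    """
    if not 0.0 <= reasoning <= 1.0:
        raise ValueError("reasoning must lie in [0, 1]")
    if consistency not in (0, 1, 2) or readability not in (0, 1, 2):
        raise ValueError("rubric scores must be 0, 1, or 2")
    return 100.0 * (
        W_REASONING * reasoning
        + W_CONSISTENCY * consistency / MAX_RUBRIC
        + W_READABILITY * readability / MAX_RUBRIC
    )


# Hypothetical sample: half the reasoning weight earned, partial
# consistency, full readability.
print(round(combined_score(0.5, 1, 2), 1))  # 55.0
```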

| Rank | Model | Reasoning | Consistency | Readability | Accuracy |
|---|---|---|---|---|---|
| | Proprietary Models | | | | |
| 1 | Nano Banana Pro | 77.5 | 89.5 | 95.8 | 46.2 |
| 2 | Nano Banana 2 | 72.6 | 86.4 | 95.9 | 39.6 |
| 3 | Seedream 5.0 | 64.1 | 87.5 | 90.6 | 24.7 |
| 4 | GPT-Image-1.5 | 54.5 | 82.3 | 90.7 | 16.0 |
| 5 | FLUX.2 Max | 47.8 | 67.2 | 68.6 | 11.9 |
| 6 | Nano Banana | 42.2 | 75.1 | 82.0 | 9.0 |
| 7 | Seedream 4.5 | 41.3 | 55.6 | 82.1 | 6.9 |
| 8 | GPT-Image-1.0 | 44.0 | 65.2 | 82.3 | 6.0 |
| 9 | FLUX.2 Pro | 38.9 | 55.5 | 70.3 | 4.4 |
| 10 | Seedream 4.0 | 32.4 | 53.2 | 77.0 | 3.1 |
| | Open-Source Models | | | | |
| 11 | Qwen-Edit-2511 | 18.6 | 45.2 | 52.1 | 2.7 |
| 12 | Step-1x (think+reflect) | 19.2 | 57.2 | 66.9 | 2.3 |
| 13 | Step-1x (think) | 17.6 | 56.3 | 68.2 | 1.4 |
| 14 | DreamOmni | 17.4 | 83.2 | 89.1 | 1.0 |
| 15 | Step-1x | 17.3 | 52.8 | 63.7 | 1.0 |
| 16 | Bagel | 15.2 | 58.6 | 69.8 | 0.6 |
| 17 | Bagel (think) | 15.6 | 54.8 | 67.8 | 0.2 |
| 18 | ICEdit | 9.8 | 33.2 | 56.5 | 0.2 |
| 19 | FLUX.2 dev | 11.3 | 17.6 | 49.6 | 0.2 |
| 20 | OmniGen | 9.7 | 33.6 | 51.6 | 0.0 |
